The room has no windows. No natural light. The temperature is held within a degree or two of a fixed target, around the clock, every day of the year. Thousands of servers hum at a frequency you feel in your chest before you hear it. Somewhere in this building, in a city you might know, under a name you almost certainly don’t, a meaningful slice of the internet you use every day is being kept alive.

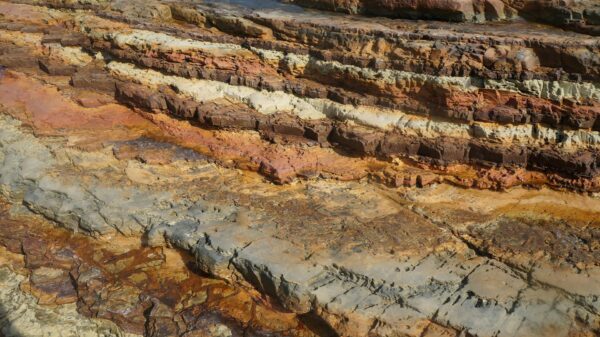

Most people picture the internet as something that floats. Cloud storage, wireless signals, invisible data zipping between satellites. The reality is the opposite. The internet is relentlessly physical: miles of copper and fiber-optic cable buried underground, junction points the size of warehouses, and cooling systems that consume more electricity than some small towns. And all of it sits on a foundation of buildings that most of the public has never heard of and will never see.

These are data centers. And the gap between what they are and what people assume they are is one of the more consequential blind spots in how we think about modern infrastructure.

What a Data Center Actually Is

A data center is, at its core, a building engineered around servers. Racks of them, stacked floor to ceiling, each one a physical machine running processes that somewhere, to someone, feel instantaneous. When you send an email, stream a film, or ask an AI assistant a question, the response travels through multiple data centers before it reaches you. The routing can touch facilities on different continents within a fraction of a second.

The buildings themselves are extraordinary feats of engineering paranoia. Multiple independent power feeds from the grid. Diesel generators that can take over in seconds if the main supply fails. Uninterruptible power supplies, essentially banks of batteries, that bridge the gap between the two. Cooling systems layered inside cooling systems. Security that rivals government facilities, with biometric access controls and perimeter sensors. The design philosophy is built around one word: redundancy. If one thing fails, something else is already running.

And here’s the strange part: all of that engineering, all of that redundancy, and one of the most stubborn causes of a major outage is still water. A burst pipe. A failed cooling unit. A roof drainage problem that went unnoticed. Water and electricity have been enemies since the first circuit was soldered, and data centers, for all their sophistication, are not immune to that particular antagonism.

Why Flooding Is the Industry’s Quiet Nightmare

A flooded server room doesn’t go dark instantly, in most cases. The systems are designed to shut down gracefully, rerouting traffic and spinning down equipment in a controlled sequence. But the aftermath is where the real problem lives. Servers that have been exposed to water, even briefly, even at low levels, cannot simply be dried out and restarted. They have to be inspected individually and often replaced. The supply chains for specialized server hardware are long. Lead times for replacement components can stretch into weeks or months.

Meanwhile, the services that ran on those machines don’t disappear. They’re rerouted. Other data centers absorb the load. That’s the theory. In practice, when a major facility goes down, the redistribution isn’t always seamless. Latency increases. Some services degrade. Occasionally, if the affected center held something that wasn’t fully replicated elsewhere, something goes dark entirely.

The internet was designed to route around damage. That principle is baked into its architecture and traces back to the 1960s, when Paul Baran’s work at RAND on distributed, packet-switched networks, an influence on ARPANET’s design, set out to build communications that a nuclear strike on any one node wouldn’t kill. But “designed to route around damage” and “immune to damage” are not the same sentence. The former is true. The latter has never been.

The Geography Nobody Talks About

Here’s the thing. The internet has a hometown, and it’s Northern Virginia, in the suburbs of Washington, D.C. Ashburn, specifically, hosts more data center capacity per square mile than anywhere else on Earth, a density so extreme that locals call the area “Data Center Alley.” That didn’t happen by accident. Undersea cable landing points, cheap land, a stable grid, and one early decision by a major provider created a gravitational pull that’s been compounding ever since. You build where the pipes already run.

That concentration is a feature and a bug simultaneously. Clustering infrastructure in stable, well-connected locations makes sense operationally. But it also means that a regional weather event, a grid failure, or a serious enough flood in a specific geography can cascade in ways that feel disproportionate to the physical area affected.

Cooling is the other underappreciated factor. Servers generate enormous heat. Moving that heat out of the building requires either massive air conditioning systems or, increasingly, water-based cooling that circulates liquid directly around the hardware. The efficiency gains from liquid cooling are real and significant. So is the irony that the solution to servers overheating involves routing water through the same rooms where water is the primary disaster scenario.

What Fragility Actually Looks Like

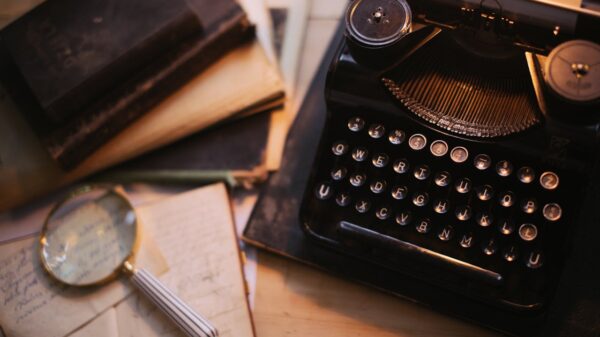

The internet doesn’t feel fragile. That’s the entire point of how it was built. It feels immediate, abundant, always-on. The engineering underneath it is specifically designed to make the fragility invisible to the end user for as long as possible.

But invisible is not the same as absent. Think about what’s actually holding this together: rotating shift workers, cooling systems that need constant babysitting, and hardware supply chains that were already strained before anyone added an emergency to the queue. When a flooding event strikes a major facility, operators must source replacement hardware through those same supply chains, placing urgent, large-volume orders that can take weeks or months to fulfill. A single flooded room in the right facility, at the wrong moment, can cause outages that affect millions of users and take months to fully resolve.

The cloud is, in the end, somebody’s basement. A very sophisticated, heavily engineered, carefully guarded basement. But a basement nonetheless.

If that framing makes the internet feel slightly less magical and slightly more mortal, that’s probably the more accurate way to think about it.

This article was created with AI assistance and reviewed by the author. The review included fact-checking, clarity edits, references, and sourcing of images.